Level Tweaks Automation at Scale

How do you keep 200 million players happy, every single week?

Candy Crush Saga has been running for over 12 years and now has 20,000+ levels, all of which need to keep working for today's players. Design standards evolve, player expectations shift, and levels built in 2014 don't play the same way in 2025. Maintaining the quality of this back catalogue at scale, while simultaneously releasing 65 new levels every week, is one of the game's biggest operational challenges.

I was brought in to map this workflow across teams and tools, understand why it had become so fragile, and identify where automation and product design could actually reduce the complexity. Internal interfaces shown later are reconstructed to respect NDA constraints, but the workflow and design logic are accurate.

Candy Crush's 20,000+ levels need constant maintenance

A “tweak” is any adjustment to a level's parameters — changing the move count, reducing candy colours, swapping a blocker type, adjusting objectives — to bring player performance metrics back to target. Each change is small. But across 20,000+ levels, with new ones shipping every week, this becomes a continuous operation that touches difficulty, engagement, retention, and monetisation simultaneously.
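As a rough illustration of what a tweak amounts to as data, the sketch below models a level as a small set of parameters and a tweak as a new variant with some of them changed. This is not the studio's actual data model: the class `LevelParams`, its field names, the helpers `apply_tweak` and `changed_fields`, and every value shown are hypothetical, chosen only to mirror the kinds of adjustment listed above.

```python
from dataclasses import dataclass, replace


# Hypothetical level parameters; names and values are illustrative only.
@dataclass(frozen=True)
class LevelParams:
    moves: int
    candy_colours: int
    blocker: str
    objective: str


def apply_tweak(level: LevelParams, **changes) -> LevelParams:
    """Return a new variant with the given parameters changed.

    The original level is left untouched, so the current level and
    the variant can be compared side by side.
    """
    return replace(level, **changes)


def changed_fields(before: LevelParams, after: LevelParams) -> dict:
    """List each parameter that differs as name -> (old, new)."""
    return {
        name: (getattr(before, name), getattr(after, name))
        for name in before.__dataclass_fields__
        if getattr(before, name) != getattr(after, name)
    }


# Made-up example: give the player more moves and one fewer colour.
current = LevelParams(moves=20, candy_colours=5,
                      blocker="licorice", objective="clear all jelly")
variant = apply_tweak(current, moves=23, candy_colours=4)
print(changed_fields(current, variant))
```

Keeping each variant as an immutable copy rather than editing the level in place is one way to make the "changes" between the current level and a variant easy to list and review.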

Live Gameplay

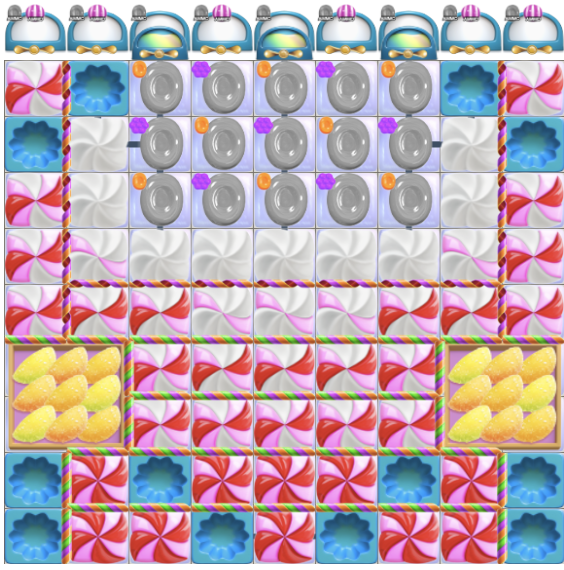

Original level

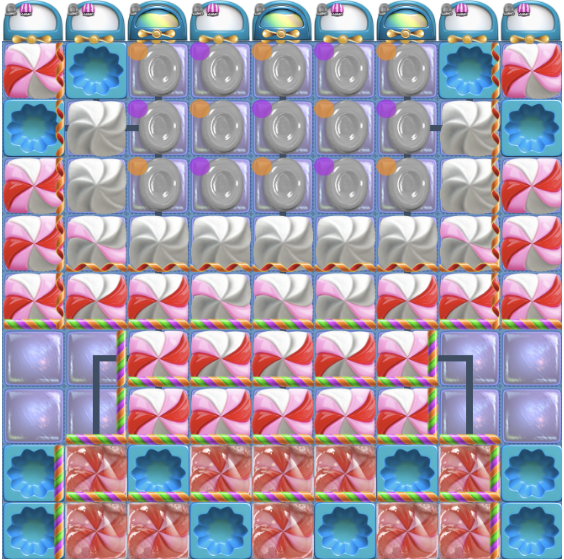

Current level

Changes

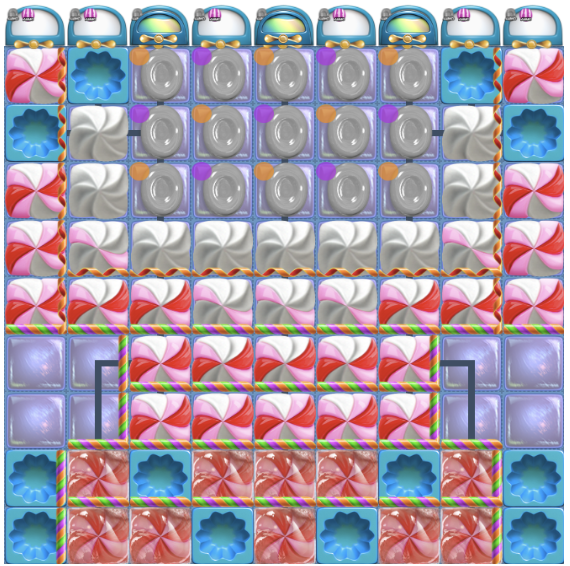

Variant 1

What makes a good level?

A level designer has to juggle different tools to balance these vectors — for thousands of levels, every single week.